Assistive Access Supports Users with Cognitive Disabilities

Assistive Access uses innovations in design to distill apps and experiences to their essential features, lightening cognitive load. The feature reflects feedback from people with cognitive disabilities and their trusted supporters, and it focuses on activities they enjoy that are also foundational to iPhone and iPad: connecting with loved ones, capturing and enjoying photos, and listening to music.

Live Speech and Personal Voice Advance Speech Accessibility

Detection Mode in Magnifier Introduces Point and Speak for Users Who Are Blind or Have Low Vision

- Deaf or hard-of-hearing users can pair Made for iPhone hearing devices directly with Mac and customize them for their hearing comfort.

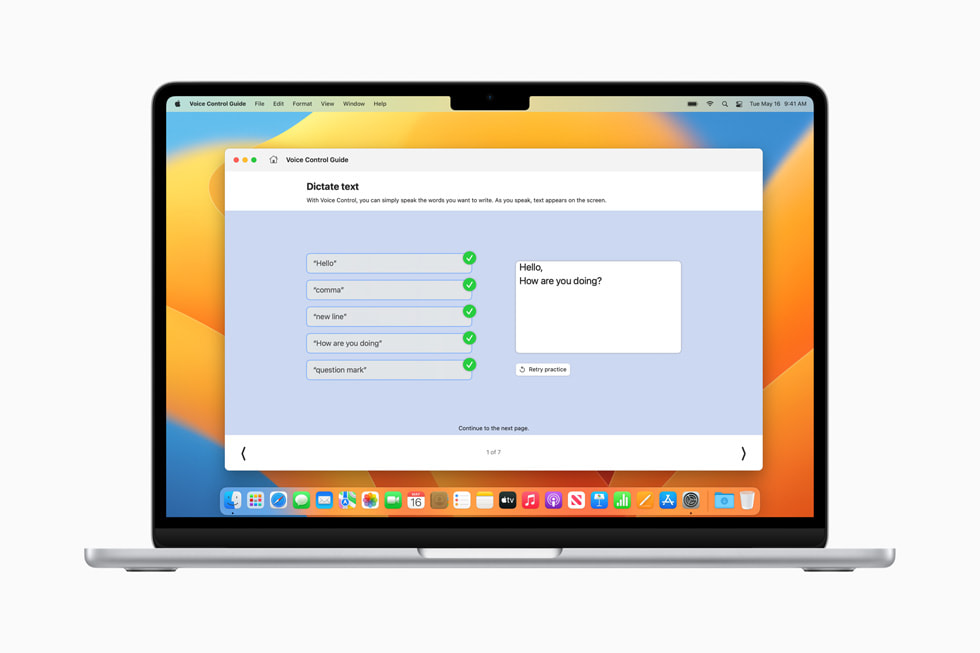

- Voice Control adds phonetic suggestions for text editing so users who type with their voice can choose the right word out of several that might sound alike, like “do,” “due” and “dew.” Additionally, with Voice Control Guide, users can learn tips and tricks about using voice commands as an alternative to touch and typing across iPhone, iPad and Mac.

- Users with physical and motor disabilities who use Switch Control can turn any switch into a virtual game controller to play their favorite games on iPhone and iPad.

- For users with low vision, Text Size is now easier to adjust across Mac apps such as Finder, Messages, Mail, Calendar and Notes.

- Users who are sensitive to rapid animations can automatically pause images with moving elements, such as GIFs, in Messages and Safari.

- For VoiceOver users, Siri voices sound natural and expressive even at high rates of speech feedback; users can also customize the rate at which Siri speaks to them, with options ranging from 0.8x to 2x.

Are you looking forward to using these new features? Let us know on Facebook, Twitter and Instagram.